5/1/2023 Datagrip import sql dump

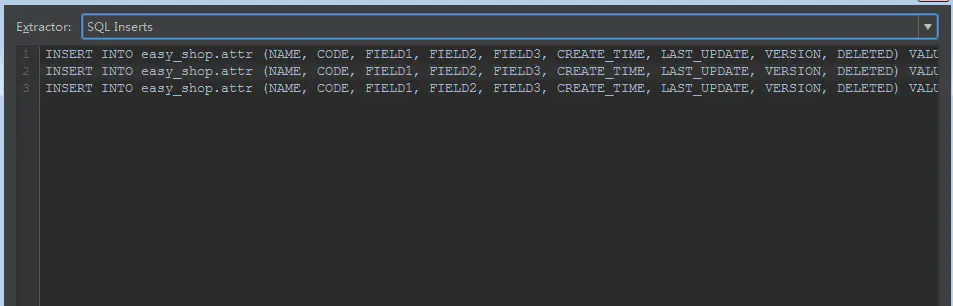

Create a new file called "SQL Batch Multi-Line " (the double extension is important for syntax highlighting). That's it! You now have access to exporting rows as batches.

One large insert is technically faster than batched inserts; however, this often comes at the cost of locking tables/rows for extended periods of time. Table and row locks block all other queries that modify data (causing a desync) and even some queries that read data (causing request lag). This is particularly harmful in production environments, especially when you need to comply with an SLA. Inserting in batches gives you a significant performance boost while still preventing tables or rows from getting locked for too long, which allows normal queries to run at the same time as the set of batched queries. Additionally, in most cases, the difference in performance between batched inserts and one large insert is very minor.

Individual inserts, on the other hand, pay a per-query overhead that makes them very slow, while batches avoid almost all of it.
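To make the locking argument concrete, here is a minimal sketch of committing once per batch, so each write transaction stays short. It uses Python's built-in `sqlite3` module purely as a stand-in; the `events` table, the `insert_in_batches` helper, and the batch size are all made up for illustration, and real lock behavior differs from one database to another. Note that it uses `executemany` rather than a multi-row `VALUES` statement.

```python
import sqlite3
from itertools import islice


def chunks(iterable, size):
    """Yield lists of at most `size` items from any iterable."""
    it = iter(iterable)
    while True:
        chunk = list(islice(it, size))
        if not chunk:
            return
        yield chunk


def insert_in_batches(conn, rows, batch_size=1000):
    """Insert rows into the hypothetical `events` table, one commit per batch.

    Returns the number of batches written.
    """
    batch_count = 0
    for chunk in chunks(rows, batch_size):
        conn.executemany(
            "INSERT INTO events (id, payload) VALUES (?, ?)", chunk
        )
        # Committing here ends the transaction, so other queries get a
        # chance to run before the next batch starts.
        conn.commit()
        batch_count += 1
    return batch_count


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE events (id INTEGER PRIMARY KEY, payload TEXT)")

rows = ((i, f"row {i}") for i in range(2500))
batches = insert_in_batches(conn, rows, batch_size=1000)
total = conn.execute("SELECT COUNT(*) FROM events").fetchone()[0]
print(batches, total)
```

With 2,500 rows and a batch size of 1,000, this writes three batches. The batch size is the knob to tune: larger batches mean fewer round trips, smaller batches mean shorter locks.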

I will also take a moment to say this is my first post, and I would love any feedback on improving it. In short, batches give you better performance and limited table/row locks when inserting thousands, millions, or even billions of rows. Batches perform orders of magnitude faster than individual inserts. In SQL, each query is parsed and executed separately, which causes significant overhead between each query.
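As a rough illustration of what "batched" means at the statement level, the sketch below groups rows into multi-row `INSERT` statements so the server parses one statement per batch instead of one per row. This is not the DataGrip extractor itself; `sql_literal` and `batched_inserts` are hypothetical helpers, and the quoting is deliberately simplistic (fine for a demo, not a substitute for a driver's parameter binding).

```python
from typing import Iterable, Iterator, List, Sequence


def sql_literal(value: object) -> str:
    """Render a Python value as a basic SQL literal (illustrative only)."""
    if value is None:
        return "NULL"
    if isinstance(value, bool):
        return "TRUE" if value else "FALSE"
    if isinstance(value, (int, float)):
        return str(value)
    # Escape single quotes by doubling them, per standard SQL.
    return "'" + str(value).replace("'", "''") + "'"


def _format(header: str, values: List[str]) -> str:
    """Join one batch of value tuples into a single statement."""
    return header + "\n  " + ",\n  ".join(values) + ";"


def batched_inserts(
    table: str,
    columns: Sequence[str],
    rows: Iterable[Sequence[object]],
    batch_size: int = 1000,
) -> Iterator[str]:
    """Yield multi-row INSERT statements with at most `batch_size` rows each."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    header = f"INSERT INTO {table} ({', '.join(columns)}) VALUES"
    batch: List[str] = []
    for row in rows:
        batch.append("(" + ", ".join(sql_literal(v) for v in row) + ")")
        if len(batch) == batch_size:
            yield _format(header, batch)
            batch = []
    if batch:  # flush the final, possibly smaller, batch
        yield _format(header, batch)


if __name__ == "__main__":
    demo_rows = [(1, "a"), (2, "b'c"), (3, None)]
    for statement in batched_inserts("users", ["id", "name"], demo_rows, batch_size=2):
        print(statement)
```

Three rows with a batch size of 2 produce two statements: one carrying two rows and one carrying the remaining row.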

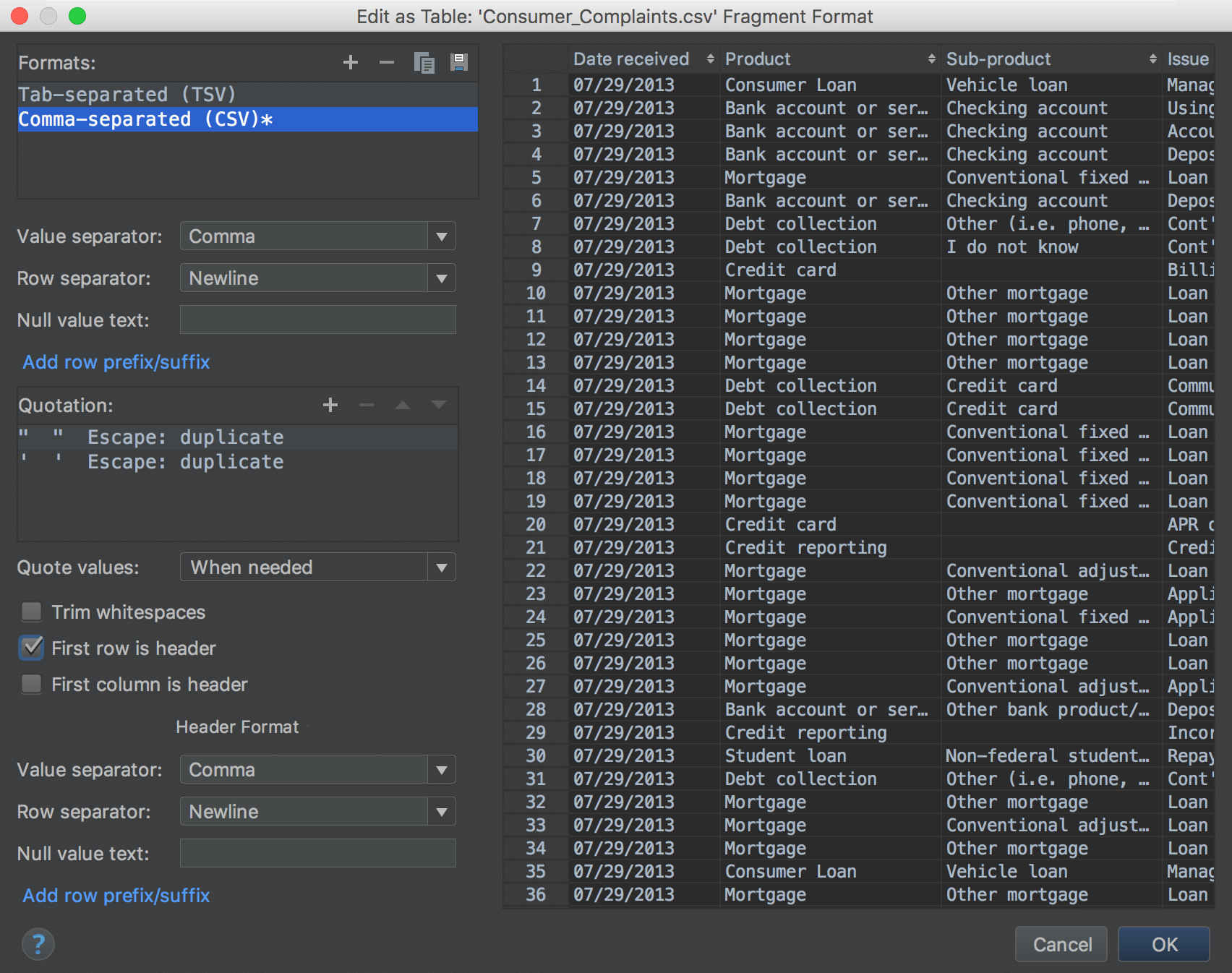

This isn't so much a "great new feature" as it is a missing feature from DataGrip. While I could go on about my love/hate relationship with DG's features (it's mostly love), it is fortunately easy enough to manually add some functionality.
